Nexus Stack: Your Data. Your Rules. Your Flow

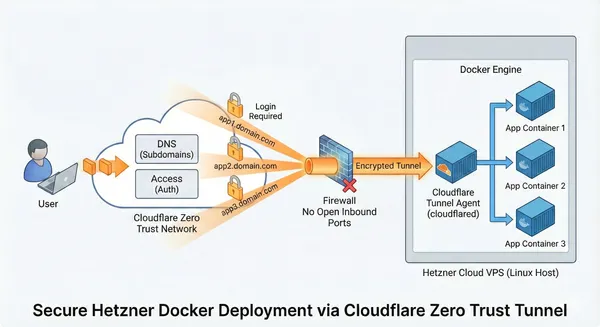

Deploy your Data Stack with Docker and Cloudflare in an automated, secure, and scalable way.

Self-hosting is awesome – you have full control over your data, no subscription fees, and you learn a lot about system administration along the way. But it comes with real challenges: security concern

If you've ever tried to learn Change Data Capture (CDC), streaming pipelines, or incremental data loading, you've probably hit the same frustrating wall I did: **static sample databases don't teach yo

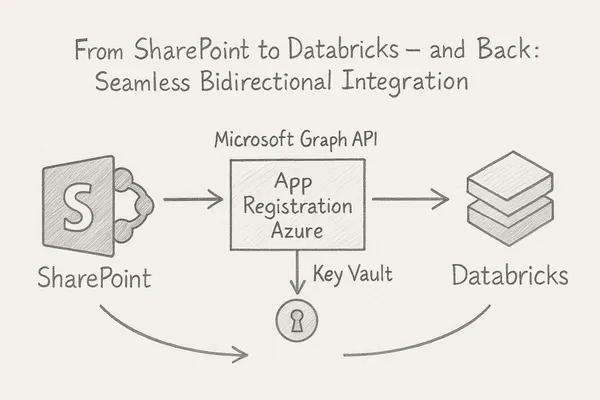

In modern data workflows, it’s essential to collect data where it originates and deliver it to where it’s needed. In this blog post, I’ll show how to connect **SharePoint directly with Databricks** –

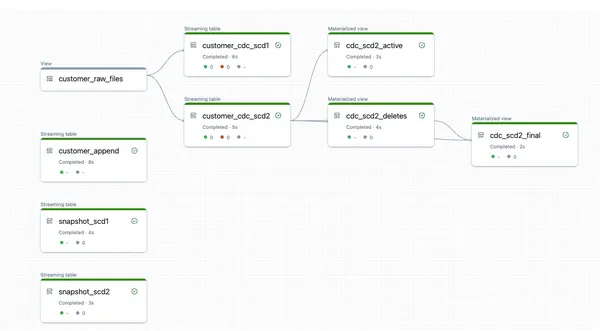

In this article, I describe a relatively simple and elegant way to load recurring full snapshots into a bronze table with Delta Live Tables without the data volume exploding.

In this blog post, I explore how to set up Databricks SQL Warehouse as a Linked Server in MS SQL Server to seamlessly query data from your Lakehouse directly within SQL Server.

In this step-by-step tutorial, you’ll learn how to set up an Azure DevOps pipeline for seamless deployment of Databricks Asset Bundles. The post walks you through the entire process, from configuring your Azure DevOps project to automating the deployment of notebooks, workflows, and other Databricks resources.

In this article, you will learn how to set up date and time dimensions in Databricks to enable precise time-based analyses and reports.

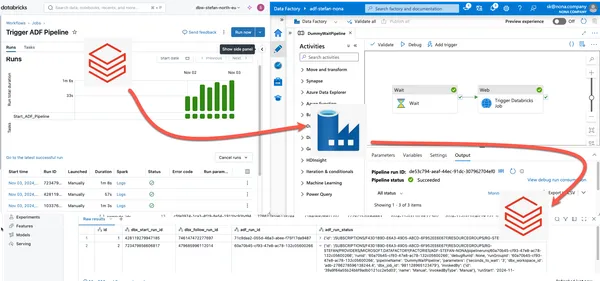

In data engineering in the Azure Cloud, a common setup is to use Azure Data Factory to orchestrate data pipelines. If you wanted to orchestrate Databricks pipelines, you had a powerful tool at hand wi

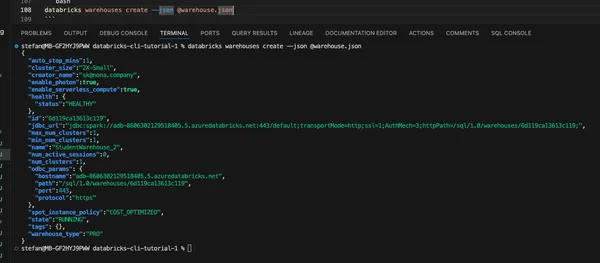

In the upcoming blog series, I will highlight different areas of the Databricks CLI with various practical examples.

In this quick guide, I will show you different methods for authenticating to Azure Databricks with the Databricks CLI.

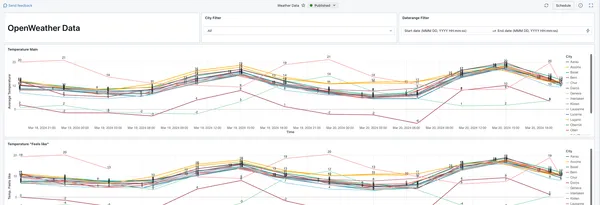

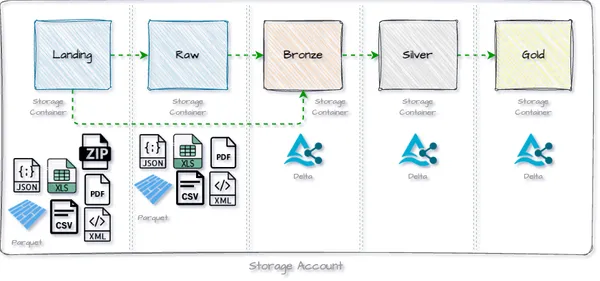

In this HowTo I will show you how to load weather data from the OpenWeather API into the Databricks Lakehouse. The data is loaded via REST API from OpenWeather and then processed in the Medallion arch

In this quick guide, I will show you how to automatically copy all schemas and tables from one catalog to another in Databricks.

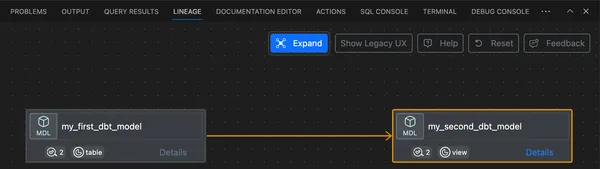

In this step-by-step guide, I will show you how to install dbt Core locally, set up the corresponding Visual Studio Code extension, and run dbt on Databricks.

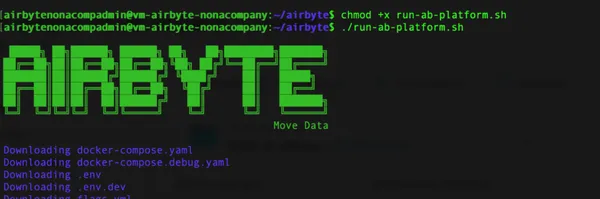

In this quick guide, I will show you how to set up an Airbyte environment on a virtual machine in Azure.

In this step-by-step guide, I describe how to create a Databricks Lakehouse environment in the Azure Cloud within 30 minutes. This guide is aimed at people who are new to Databricks and want to create

Before the old year ended, I decided to write on Medium in addition to my personal blog.

Azure is Microsoft’s cloud computing platform. It offers a comprehensive suite of cloud services that let companies develop, deploy, and manage applications without maintaining physical hardware on site. Azure users can draw on resources such as virtual machines, storage, databases, networks, and much more in Microsoft’s globally distributed data centers. Azure is one of the leading hyperscalers on the market. In the following article, I would like to give beginners a few useful tips to make it easier to get started with the Azure portal.

In this quick guide, I will show you how to connect to a Databricks SQL Warehouse with DBeaver.